Day 50 of 50 Days of Python: Building an End-to-End Data Pipeline and Reporting Dashboard in Python.

Part of Week 8: Final Project and Wrap-Up

Welcome to the last day of what has been a really fun series to create and serve to 800+ subscribers! Honestly, it’s been a really good experience getting to know more than 20 of you who messaged me off the back of this series asking for mentoring, help and guidance around all things data and Python.

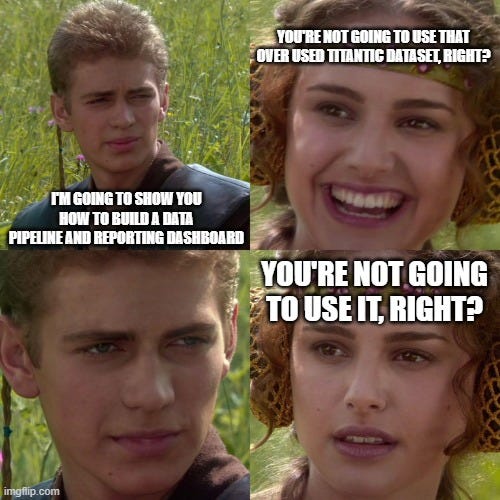

Today is a little different: it’s all about walking through code that contains everything you need to build an end-to-end data pipeline using the dreaded Titanic dataset. In my last post I said we would build this on the interactions and views from this series. However, I spoke too soon: it turns out this isn’t possible unless you have access to my account. So apologies about that.

But I want to close this series with something actionable and real-world. So, here’s how you can take everything we’ve covered, from wrangling messy data, to visualising it, to building dashboards, and put it together in one workflow that actually delivers value. By the end of this post, you’ll know exactly how to:

Load, clean, and transform data

Orchestrate your pipeline (with a proper script)

Surface the results in a slick, interactive dashboard

Do all of this with nothing but Python and a few libraries you’ve used throughout the last 50 days.

So, with that all said, let’s get into it, one last time.

The Dataset: Titanic

If you’re reading this, you’ve almost certainly seen the Titanic dataset before. It’s the go-to for every “getting started with data” course, and for good reason: it’s got a bit of everything, including categorical variables, missing data, easy-to-understand context, and a binary classification target. It’s also dead simple to get hold of via Kaggle or direct download.

But this time, we’re not just going to poke around in a notebook. We’re going end-to-end—raw data, pipeline, to dashboard.

Pipeline Overview

The pipeline for today’s project has 4 main parts:

Ingest: Grab the Titanic CSV, either from a local file or a remote source.

Clean & Transform: Deal with missing values, encode categorical variables, and engineer a couple of features to make our analysis more interesting.

Analytics: Aggregate, slice, and dice the data so we’ve got something meaningful to show off in a dashboard.

Dashboard: Use Streamlit (or your preferred Python dashboarding library) to make an interactive web app where users can explore the data themselves.

Step-by-Step: Building the Pipeline

3.1. Data Ingestion

We’re keeping it simple. Here’s a starter function to load the data:

import pandas as pd

def load_titanic_data(filepath='titanic.csv'):
    return pd.read_csv(filepath)

Download the Titanic dataset if you haven’t already (just Google “Titanic CSV dataset”). Save it in your working directory.
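The overview mentions loading from either a local file or a remote source. `pd.read_csv` accepts a path, a URL, or a file-like object, so one possible way to cover all of them is a thin wrapper that also checks for the columns the later steps read. This is only a sketch: the function name `load_csv_checked` and the `REQUIRED_COLUMNS` set are my own illustration, and the column list assumes your copy of the dataset matches the one used in this post.

```python
import pandas as pd

# Columns the later steps in this post read; assumed to match your copy of the dataset.
REQUIRED_COLUMNS = {"Survived", "Pclass", "Sex", "Age", "SibSp", "Parch", "Embarked"}

def load_csv_checked(source, required_columns=REQUIRED_COLUMNS):
    """Read a CSV from a local path, URL, or file-like object and fail early
    if any expected column is missing."""
    df = pd.read_csv(source)
    missing = set(required_columns) - set(df.columns)
    if missing:
        raise ValueError(f"Missing expected columns: {sorted(missing)}")
    return df
```

Failing at load time makes a mismatched copy of the dataset obvious straight away, rather than surfacing as a `KeyError` halfway through the cleaning step.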

3.2. Data Cleaning & Transformation

This is where the magic (and pain) happens. Here’s what we’ll do:

Fill missing age values with the median

Fill embarked with the most common value

Encode sex and embarked as numbers

Engineer a “FamilySize” column

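def preprocess(df):
    # Work on a copy so the caller's DataFrame is left untouched
    df = df.copy()
    # Assign the result back instead of calling fillna(..., inplace=True) on a column
    df['Age'] = df['Age'].fillna(df['Age'].median())
    df['Embarked'] = df['Embarked'].fillna(df['Embarked'].mode()[0])
    # Encode the categorical columns as integers
    df['Sex'] = df['Sex'].map({'male': 0, 'female': 1})
    df['Embarked'] = df['Embarked'].map({'S': 0, 'C': 1, 'Q': 2})
    # Family size = siblings/spouses + parents/children + the passenger
    df['FamilySize'] = df['SibSp'] + df['Parch'] + 1
    return df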
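A quick way to sanity-check a cleaning step like this is to run the same operations on a tiny hand-made DataFrame and confirm nothing is left missing. That matters here because `Series.map` returns NaN for any value the mapping does not cover. The rows below are invented for illustration (they are not real Titanic records), and `clean_toy` is just a hypothetical name for a function that repeats the steps above.

```python
import pandas as pd

# Invented rows for illustration only; not real Titanic records.
toy = pd.DataFrame({
    "Age": [22.0, None, 40.0],
    "Embarked": ["S", None, "S"],
    "Sex": ["male", "female", "female"],
    "SibSp": [1, 0, 2],
    "Parch": [0, 0, 1],
})

def clean_toy(df):
    """Repeat the cleaning steps from this section on a copy of df."""
    out = df.copy()
    out["Age"] = out["Age"].fillna(out["Age"].median())
    out["Embarked"] = out["Embarked"].fillna(out["Embarked"].mode()[0])
    out["Sex"] = out["Sex"].map({"male": 0, "female": 1})
    out["Embarked"] = out["Embarked"].map({"S": 0, "C": 1, "Q": 2})
    out["FamilySize"] = out["SibSp"] + out["Parch"] + 1
    return out

clean = clean_toy(toy)
# Any NaN left here would point at a value the mappings did not cover.
remaining_nulls = int(clean.isna().sum().sum())
print(clean)
print("Missing values after cleaning:", remaining_nulls)
```

If the count is ever above zero on the real data, checking `df['Embarked'].unique()` and `df['Sex'].unique()` before the mapping is a reasonable first step.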

3.3. Analytics Layer

Now, let’s add some basic aggregations you might want to surface:

def generate_metrics(df):
    survived = df['Survived'].mean()
    avg_age = df['Age'].mean()
    pclass_counts = df['Pclass'].value_counts()
    return {
        'Survival Rate': survived,
        'Average Age': avg_age,
        'Pclass Counts': pclass_counts
    }

3.4. Dashboard Time!
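Before wiring up the app, it may help to see the kind of grouped breakdown a dashboard like this could surface beyond the three headline metrics. The sketch below uses a pandas `groupby` with named aggregation to get a survival rate and passenger count per group. The helper name `survival_by` and the toy rows are assumptions made for illustration, not part of the original pipeline.

```python
import pandas as pd

# Invented rows for illustration; column names follow the Titanic CSV used in this post.
toy = pd.DataFrame({
    "Pclass":   [1, 1, 2, 3, 3, 3],
    "Sex":      [1, 0, 1, 0, 0, 1],   # 0 = male, 1 = female, as encoded in preprocess()
    "Survived": [1, 0, 1, 0, 0, 1],
})

def survival_by(df, column):
    """Return the survival rate and passenger count for each value of `column`."""
    return (
        df.groupby(column)["Survived"]
          .agg(survival_rate="mean", passengers="count")
          .sort_index()
    )

by_class = survival_by(toy, "Pclass")
print(by_class)
```

In the app, a table like this could be handed to `st.dataframe`, or its `survival_rate` column to `st.bar_chart`.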

This is where we get interactive. I’m using Streamlit for its simplicity and ease of deployment (seriously, you can get something up and running in 5 minutes).

Install Streamlit first if you don’t have it:

pip install streamlit

And here’s the basic app skeleton:

import streamlit as st

def main():
    st.title("Titanic Data Dashboard")
    df = load_titanic_data()
    df = preprocess(df)

    st.header("Dataset Overview")
    st.write(df.head())

    st.header("Survival Metrics")
    metrics = generate_metrics(df)
    st.write(f"Survival Rate: {metrics['Survival Rate']*100:.2f}%")
    st.write(f"Average Age: {metrics['Average Age']:.2f}")
    st.write("Passenger Class Counts:")
    st.bar_chart(metrics['Pclass Counts'])

    st.header("Filter by Sex")
    sex_filter = st.radio("Select Sex:", ("All", "Male", "Female"))
    if sex_filter != "All":
        sex_val = 0 if sex_filter == "Male" else 1
        filtered_df = df[df['Sex'] == sex_val]
        st.write(filtered_df)
        st.write(f"Survival Rate for {sex_filter}: {filtered_df['Survived'].mean()*100:.2f}%")
    else:
        st.write(df)

if __name__ == "__main__":
    main()

To run the dashboard:

streamlit run your_script.py

Now you’ve got an interactive reporting dashboard for the Titanic dataset, all written in Python, and you can tweak it as you see fit. Try adding filters for age, passenger class, or embarkation point and see how the survival rates shift.
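If you want a starting point for those extra filters, one option is to keep the filtering logic in a plain pandas function and let the Streamlit widgets only collect the inputs. The sketch below is one way that might look. `filter_passengers` is a hypothetical helper, and the widget labels and ranges in the comments are placeholders rather than values taken from the dataset.

```python
import pandas as pd

def filter_passengers(df, age_range=None, pclasses=None, ports=None):
    """Return the rows matching every filter that is set; None means 'no filter'."""
    mask = pd.Series(True, index=df.index)
    if age_range is not None:
        low, high = age_range
        mask &= df["Age"].between(low, high)  # inclusive on both ends
    if pclasses:
        mask &= df["Pclass"].isin(pclasses)
    if ports:
        mask &= df["Embarked"].isin(ports)
    return df[mask]

# Possible wiring inside main(), after preprocess(df); labels and ranges are placeholders:
#   age_range = st.slider("Age range", 0, 80, (0, 80))
#   pclasses = st.multiselect("Passenger class", [1, 2, 3], default=[1, 2, 3])
#   subset = filter_passengers(df, age_range=age_range, pclasses=pclasses)
#   if not subset.empty:
#       st.write(f"Survival Rate: {subset['Survived'].mean() * 100:.2f}%")
```

Keeping the function free of Streamlit calls means it can be tested on its own, without starting the app.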

Wrapping Up (and Next Steps)

And just like that, 50 days of Python come to a close. If you’ve followed along even for just a handful of posts, you’ve now got a solid base in the language, an understanding of the data workflow, and you can put together a full data pipeline that ends in an actual dashboard.

As the title says, this is purely Python-based, but there is a lot more you can build around this code, such as:

Connect to a database via pyodbc and push the cleaned data directly into a table.

Create dashboards in Amazon QuickSight, Databricks or Power BI, depending on your tech stack.

Set up deployment to dev, test and prod via environment variables, scheduled via Azure DevOps, GitHub Actions or Jenkins.
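To make the first and last of those ideas a little more concrete, here is a minimal sketch that picks a target database from an environment variable and writes a DataFrame into a table. It deliberately uses SQLite from the standard library as a stand-in, not pyodbc, so it runs without a database server or driver. The variable name `PIPELINE_ENV`, the file names in `DB_PATHS`, and the table name `titanic_clean` are all assumptions made up for this example.

```python
import os
import sqlite3
from contextlib import closing

import pandas as pd

# Hypothetical mapping from environment name to database file; adjust to your setup.
DB_PATHS = {
    "dev": "titanic_dev.db",
    "test": "titanic_test.db",
    "prod": "titanic_prod.db",
}

def target_db_path(environ=os.environ):
    """Pick the database file from PIPELINE_ENV (an assumed variable name)."""
    env = environ.get("PIPELINE_ENV", "dev")
    if env not in DB_PATHS:
        raise ValueError(f"Unknown PIPELINE_ENV: {env!r}")
    return DB_PATHS[env]

def write_table(df, db_path, table="titanic_clean"):
    """Write df to a SQLite table, replacing the output of any previous run."""
    with closing(sqlite3.connect(db_path)) as conn:
        df.to_sql(table, conn, if_exists="replace", index=False)
        conn.commit()

# Possible use at the end of a pipeline script, after load_titanic_data() and preprocess():
#   write_table(df, target_db_path())
```

A scheduler such as GitHub Actions or Jenkins would then only need to set `PIPELINE_ENV` before running the script. Swapping SQLite for a real warehouse would mean replacing the connection in `write_table` with one your stack supports.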

A Note of Thanks

Thank you again to everyone who has read, shared, or reached out during this journey. It’s been genuinely rewarding to see so many people level up their skills, ask smart questions, and push themselves to learn more. Python is only the beginning.

If you want more, don’t be a stranger: drop me a message, or keep an eye out for what’s next (hint: there WILL be a next!).

Happy coding. And remember: keep building.

A small project created on the basis of the code provided in the post:

https://github.com/AdityaGarg1995/TinyProjects/blob/main/Data%20Reporters/Titanic%20Dataset%20Reports%20Creator/Titanic_Dataset_Reports_Creator_Streamlit.py

I have added bar graphs for Survival Metrics by Passenger Class, Sex, and Embarkation Port.

Please note that streamlit's own graphs only have a vertical text orientation for x-axis labels. So alternate libraries like altair, seaborn or matplotlib are required. My codebase uses Altair.

Thank you Mr. Kindred for this wonderful post and project idea for programmers and data analysts alike.

Leaving the below information for any interested readers:

* Calling fillna(..., inplace=True) on a selected column, as in df['Age'].fillna(..., inplace=True), triggers a FutureWarning in recent pandas 2.x releases and is expected to stop updating the DataFrame in pandas 3.0. Hence, please assign the result back to the DataFrame column rather than using the inplace argument.

* Different sources have different Titanic Datasets. The one used here seems to be from https://github.com/datasciencedojo/datasets/blob/master/titanic.csv or a similar source.

Stanford University provides a different Titanic Dataset.